Calls from the research community and movements like the San Francisco Declaration on Research Assessment (DORA) and The Leiden Manifesto have gone a long way to raise awareness of the misuse of Journal Impact Factors (JIFs) for assessing individual research contributions. They have also stimulated discussion around responsible research assessment and the need for broader, more relevant measures that consider impact beyond academia.

More than 20,000 individuals and organisations across the world have signed DORA, leading some universities to establish responsible models for faculty hiring, promotion, and tenure and new principles for research evaluation. Publishers have committed to making article-level metrics available, while funders have agreed to consider a broad range of measures for evaluating research impact.

Despite growing support for changes to research evaluation, institutions continue to foster the ‘publish or perish’ rule through their use of JIFs, h-indices and other metrics within hiring, promotion, or funding decisions. Worryingly, metrics also appear to play a role in helping universities identify jobs at risk of redundancy.

On this page

- Losing our appetite for change

- A return to metrics?

- Waning support for new impact measures

- Main challenges to change

- What are academics prepared to do?

- What will drive change?

- The data

- Download the report as a PDF


Losing our appetite for change

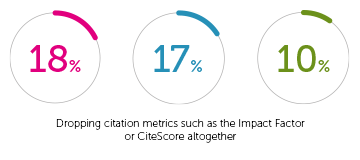

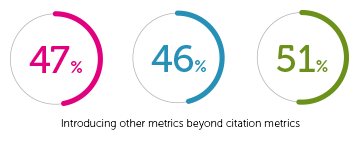

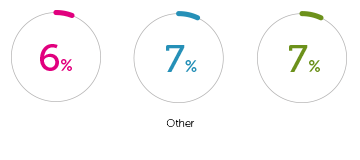

The apparent gains towards a broader set of metrics for research evaluation, and the general appetite for change, seem to be slowing. In this year’s survey, 47% of academics want to introduce metrics beyond citation metrics, just up on 46% in 2020, but still down on 51% in 2019. There is slightly more interest in dropping JIFs altogether – 18% in 2021, up from 17% in 2020 and 10% in 2019. However, fewer than a third (29%) want to change the way incentives are used to publish research, a steady drop from 30% in 2020 and 32% in 2019.

Change tenure requirements so publishing impact is not just citations and journal quality

There are wide differences of opinion at the regional level. The UK is keenest to drop citation metrics (23%) and Latin America is the least interested (6%). Enthusiasm for introducing other metrics beyond citation metrics is highest in SSA (60%) and Latin America (53%) and lowest in Australasia (43%) and the UK (42%). Latin America and N&WE (excluding UK) are most eager to change the way incentives are used to publish research, with 36% in each region choosing this option.

I am happy with the way things are.

A return to metrics?

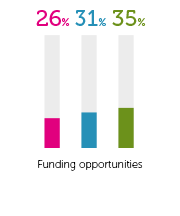

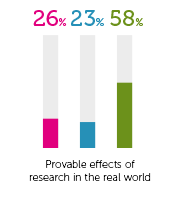

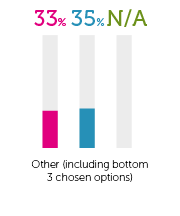

For the second year running, participants chose journal citations and impact factors as the main way research impact is currently measured, with 70% opting for this, compared to 71% in 2020 and 58% in 2019. This option is most popular in S&EE at 79% and least popular in the UK at 60%, both consistent with 2020. Other measures that rank highly are tenure or career advancement (32%), funding opportunities (26%) and provable effects of research in the real world (26%).

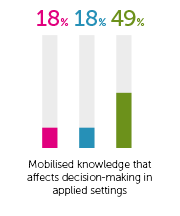

Mobilised knowledge that affects decision making is at the bottom of the choices globally (18%), with SSA the only region where more than 1 in 4 chose it. There continues to be less focus on measurable changes in practice, policy, or behaviour, which stands at 25% in 2021, slightly up from 2020 (24%) and considerably down on 2019 (59%). However, UK interest in this measure of research quality has risen to 45% from 42% in 2020.

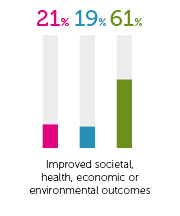

Despite the need for research to influence real change in response to the pandemic, the use of improved societal health, economic or environmental outcomes as a measure of research impact remains relatively low, standing at 21% in 2021 and 19% in 2020, compared to 61% in 2019.

Waning support for new impact measures

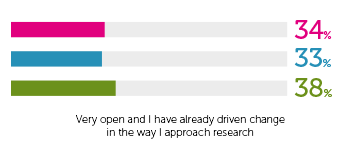

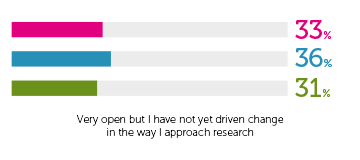

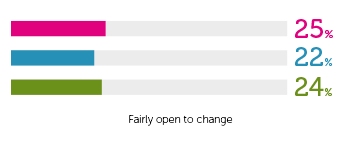

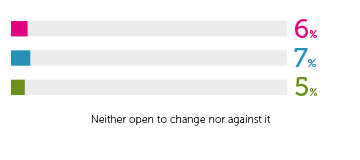

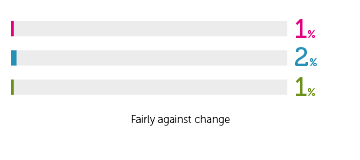

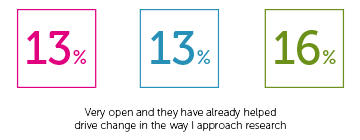

Academics remain less keen on changing the way research impact is measured than they were in 2019: 34% of global respondents in 2021 and 33% in 2020, compared to 38% in 2019, say they are very open to change and have already driven change. Academics believe their institutions are even less supportive of change than they are personally, with the share saying their institution is very open to change and has already driven it dropping from 16% in 2019 to 13% in 2020 and 2021.

I am judged by how many articles I publish in a constantly changing list of journals and how much external funding I bring in – doing anything else is a waste of time

In 2020, we found that respondents in SSA, ME&NA and India were more likely than those in other regions to think that people in their institutions had already driven change. This year, however, India is the outlier, with nearly 1 in 4 believing this, while the other two regions have fallen back. Europe, North America, the UK and Australasia are still less likely to agree that change has been delivered, especially N&WE (excluding UK), where only 4% agree compared to 11% in 2020.

Main challenges to change

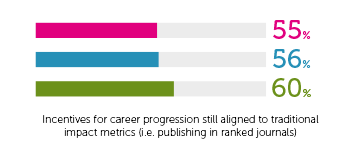

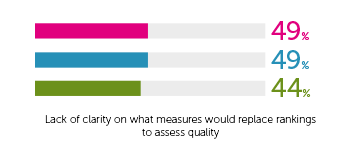

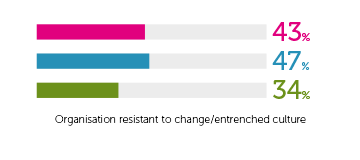

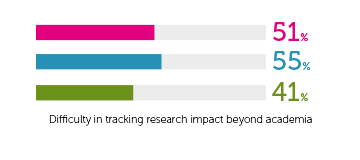

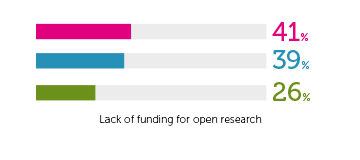

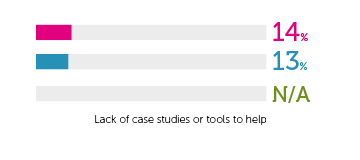

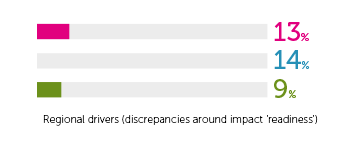

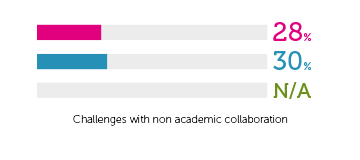

The top three barriers to change are career incentives (55%), difficulty of tracking impact beyond academia (51%) and lack of clarity on what other measures could replace rankings (49%). Organisational resistance to change and entrenched culture has risen to 43% in 2021 from 34% in 2019, although it is down from 47% in 2020. Lack of funding for open research appears to be more of a challenge in 2021 – 41% compared to 39% in 2020 and 26% in 2019.

At the regional level, career incentives still aligned to traditional impact metrics are cited most often by those in Australasia (70%) and North America (65%).

Most authentic interest in impact and assessing impact in ways that don’t just add more workload and stress for busy, stressed and undervalued academics

Unlike in 2020, the UK does not top any of the challenges and sits nearer to the average. This year, North America scores the highest for organisational resistance to change (61%), lack of clarity on what measures would replace rankings (63%) and difficulty in tracking research impact beyond academia (66%).

There is a significant difference of opinion between genders when it comes to incentives for career progression still aligned to traditional impact metrics, with 66% of women, compared to 51% of men, choosing this option – the difference has increased slightly since 2020.

What are academics prepared to do?

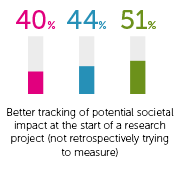

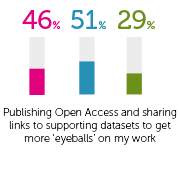

To drive change, 46% of academics globally are willing to publish open access and share links to supporting datasets – this was also the top choice in 2020 (51%). Next most popular is publishing non-traditional content if the rewards mechanisms were in place (43%). Low- or middle-income regions are least attracted to publishing non-traditional content, whereas Europeans, North Americans and Australasians choose this around two-thirds of the time. There are also different levels of support among the genders for publishing non-traditional content – male academics are less keen (40%) than female (51%).

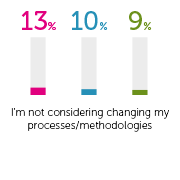

Few academics rule out change altogether: only 13% globally say they are not considering changing their processes/methodologies.

I want to see complete change – moving away from metrics to look at the intrinsic as well as extrinsic value of all research. It’s about quality of research, not how many tweets you get

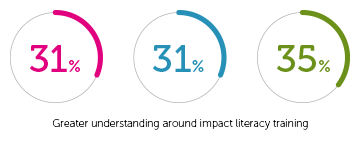

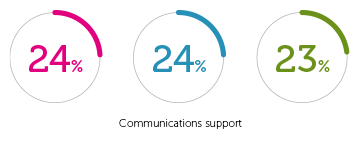

What will drive change?

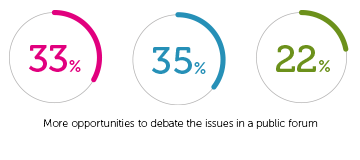

Academics believe that more opportunities for collaboration with industry and practice are key for driving change, with 60% choosing this option. Next in importance is having more publishers make research open access (50%). A third are in favour of more debate of the issues in a public forum, followed closely by impact literacy training (31%).

What change? Honestly, neither the industry, nor the society, require the volume of research that academia conducts. Most of the research is written by experts and for experts, and no one else cares

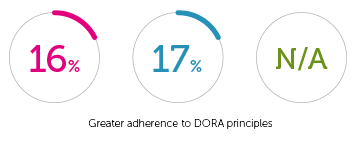

There are different levels of support for the options depending on academics’ career stage. Students are particularly in support of driving change through impact literacy training (42%), collaboration between industry and practice (73%), and communications support (27%). For five of the six options, the highest level of support comes from the early career stages; only greater adherence to DORA principles (19%) gains more support from those in the mid-career stage of 16-20 years post PhD.

The system is so broken; I cannot think of any one repair that would help at all

Have we 'hit a wall' on pushing for broader metrics?

The Time for change report suggests a slowdown of interest in a broader set of metrics for research evaluation, and a lethargy for change.

Dr Faith Welch, Research Impact Manager at the University of Auckland in New Zealand, and Steve Lodge, Head of Services at Emerald Publishing, talk about what we are seeing in the sector, and where we should go from here.

Impact services

As a publisher, we contribute to a more impact-literate research ecosystem by actively offering impact support to institutions.

Our Impact Services platform has been created in collaboration with innovative thought leaders and impact strategists, all aiming to make 'impact culture' a daily reality for researchers and institutions alike – with useful tools including an impact planner, a skills toolkit and a healthcheck for institutions.

The data

How important is demonstrating impact of research on society to...?

| Statement | 2021 | 2020 | 2019 | 2018 |
|---|---|---|---|---|
| You personally | 8 | 8 | 8 | 8 |
| Your university | 8 | 8 | 8 | 7 |
| Funders | 8 | 8 | 8 | 8 |
| Policymakers | 8 | 8 | 7 | 7 |
| Society | 8 | 8 | 7 | 7 |

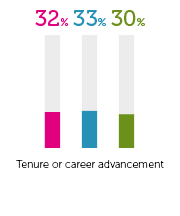

How is the quality of your research impact currently measured?

| Method | % 2021 | % 2020 | % 2019 |
|---|---|---|---|
| Journal citations and impact factors | 70 | 71 | 58 |
| Tenure or career advancement | 32 | 33 | 30 |
| Funding opportunities | 26 | 31 | 35 |
| A measurable change in practice, policy or behaviour | 25 | 24 | 59 |
| Provable effects of research in the real world | 26 | 23 | 58 |
| Improved societal health, economic or environmental outcomes | 21 | 19 | 61 |
| Mobilised knowledge that affects decision-making in applied settings | 18 | 18 | 49 |
| Other (including bottom 3 chosen options) | 33 | 35 | n/a |

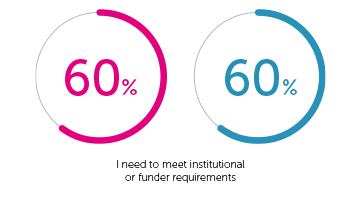

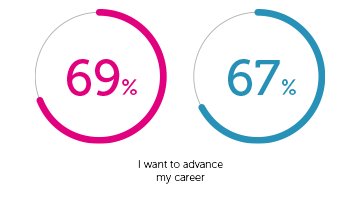

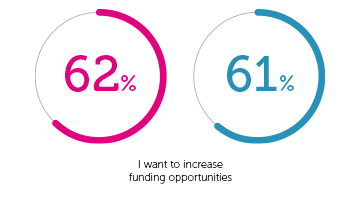

How important are the following factors in helping achieve broader impact with your work?

| Statement | % 2021 | % 2020 |

|---|---|---|

| I need to meet institutional or funder requirements |

60 | 60 |

| I want to advance my career |

69 | 67 |

| I want to increase funding opportunities |

62 | 61 |

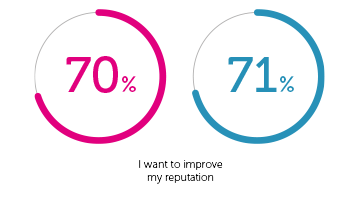

| I want to improve my reputation |

70 | 71 |

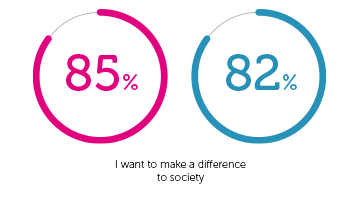

| I want to make a difference to society |

85 | 82 |

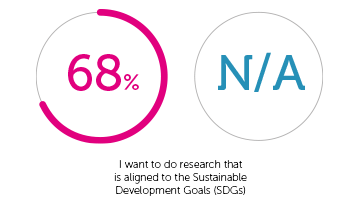

| I want to do research that is aligned to the Sustainable Development Goals (SDGs) |

68 | n/a |

How strongly do you support the idea of changing the way research impact is measured?

| Statement | 2021 | 2020 | 2019 |

|---|---|---|---|

| Very open and I have already driven change in the way I approach research | 34 | 33 | 38 |

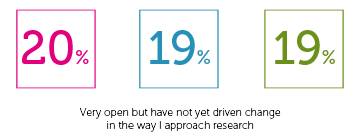

| Very open but I have not yet driven change in the way I approach research | 33 | 36 | 31 |

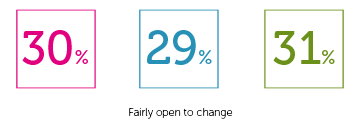

| Fairly open to change | 25 | 22 | 24 |

| Neither open to change nor against it | 6 | 7 | 5 |

| Fairly against change | 1 | 2 | 1 |

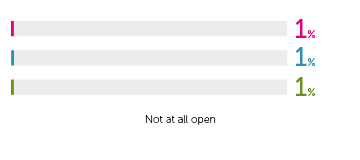

| Not at all open | 1 | 1 | 1 |

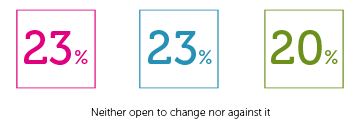

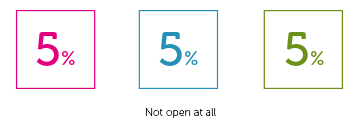

How supportive/interested are those in your broader institution in driving change when it comes to other ways to measure research impact?

| Statement | % 2021 | % 2020 | % 2019 |
|---|---|---|---|
| Very open and they have already helped drive change in the way I approach research | 13 | 13 | 16 |
| Very open but have not yet driven change in the way I approach research | 20 | 19 | 19 |
| Fairly open to change | 30 | 29 | 31 |
| Neither open to change nor against it | 23 | 23 | 20 |
| Fairly against change | 9 | 11 | 10 |
| Not open at all | 5 | 5 | 5 |

What main change would you like to see in the way research quality is measured?

| Statement | % 2021 | % 2020 | % 2019 |
|---|---|---|---|
| Dropping citation metrics such as the Impact Factor or CiteScore altogether | 18 | 17 | 10 |
| Introducing other metrics beyond citation metrics | 47 | 46 | 51 |
| Changing the way incentives are used to publish research work | 29 | 30 | 32 |
| Other | 6 | 7 | 7 |

Which of the following do you consider to be the biggest ‘challenges’ of changing the way research impact is assessed?

| Statement | % 2021 | % 2020 | % 2019 |
|---|---|---|---|
| Incentives for career progression still aligned to traditional impact metrics (i.e. publishing in ranked journals) | 55 | 56 | 60 |
| Lack of clarity on what measures would replace rankings to assess quality | 49 | 49 | 44 |
| Organisation resistant to change/entrenched culture | 43 | 47 | 34 |
| Difficulty in tracking research impact beyond academia | 51 | 55 | 41 |
| Lack of funding for open research | 41 | 39 | 26 |
| Lack of case studies or tools to help | 14 | 13 | n/a |
| Regional drivers (discrepancies around impact ‘readiness’) | 13 | 14 | n/a |
| Challenges with non academic collaboration | 28 | 30 | n/a |

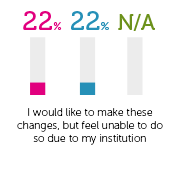

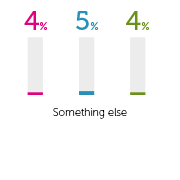

When it comes to your research, what types of change would you consider implementing?

| Statement | 2021 | 2020 | 2019 |

|---|---|---|---|

| Publishing non-traditional content (short form, policy notes, blogs etc) if the rewards mechanisms for this were in place | 43 | 46 | 48 |

| Better tracking of potential societal impact at the start of a research project (not retrospectively trying to measure) | 40 | 44 | 51 |

| Publishing open access and sharing links to supporting datasets to get more ‘eyeballs’ on my work | 46 | 51 | 29 |

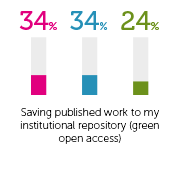

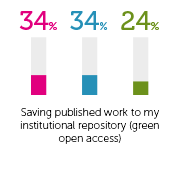

| Saving published work to my institutional repository (green open access) | 34 | 34 | 24 |

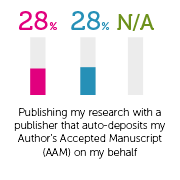

| Publishing my research with a publisher that auto-deposits my Author’s Accepted Manuscript (AAM) on my behalf | 28 | 28 | n/a |

| I’m not considering changing my processes/methodologies | 13 | 10 | 9 |

| I would like to make these changes, but feel unable to do so due to my institution | 22 | 22 | n/a |

| Something else | 4 | 5 | 4 |

In your opinion what are the best way(s) to enable change to happen? (% of times chosen in top 3)

| Statement | 2021 | 2020 | 2019 |

|---|---|---|---|

| Greater understanding around impact literacy training | 31 | 31 | 35 |

| More opportunities for collaboration between industry and practice | 60 | 63 | 61 |

| Communications support | 24 | 24 | 23 |

| Greater adherence to DORA principles | 16 | 17 | n/a |

| More publishers making research open access | 50 | 52 | 35 |

| More opportunities to debate the issues in a public forum | 33 | 35 | 22 |

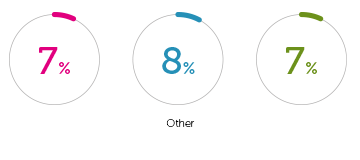

| Other | 7 | 8 | 7 |

Download the report as a PDF

You can get a PDF version of our Time for change report by filling in this form; you'll be able to download the report instantly.